Introduction


- Handle a wide range of inquiries

- Adapt to conversational nuances

- Provide responses that feel natural and engaging

How Famulor Works

Famulor’s voice AI system holds dynamic, conversational interactions with callers. Unlike conventional intent-based dialogue systems built on Natural Language Understanding (NLU) models, Famulor relies on generative Large Language Models (LLMs) to deliver responses that feel natural, flexible, and human-like.

From Intent-Based Systems to Conversational AI

Intent-based systems are designed to recognize specific inputs and map them to pre-programmed “intents.”

- Advantage: Effective for predictable, repetitive interactions.

- Disadvantage: Limited flexibility, can feel robotic when deviating from the script.

Famulor’s LLM-based conversational AI, by contrast:

- Utilizes advanced models from OpenAI, Meta (LLaMA), Mistral, and Anthropic.

- Adapts in real time to the unique phrasing and needs of each interaction.

- Resembles a human employee who follows guidelines but responds dynamically.

This human-like approach makes Famulor especially well suited for sales calls and more complex support interactions.
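To make the contrast concrete, here is a minimal sketch of how an intent-based system of the kind described above might route an utterance. The intent names, keywords, and the `match_intent` function are hypothetical and chosen only for illustration; they do not describe any Famulor component.

```python
import re

# Hypothetical intents and trigger keywords, invented for this illustration.
INTENTS = {
    "opening_hours": {"open", "hours", "closing"},
    "cancel_order": {"cancel", "refund"},
}


def match_intent(utterance: str) -> str:
    """Return the first intent whose keywords appear in the utterance.

    Anything that matches no keyword lands in a generic "fallback" intent,
    which is where scripted systems tend to feel robotic.
    """
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    for intent, keywords in INTENTS.items():
        if words & keywords:
            return intent
    return "fallback"


print(match_intent("What are your opening hours?"))
print(match_intent("I changed my mind about the purchase"))
```

The second utterance expresses a wish to cancel without using any trigger keyword, so a fixed mapping like this one misses it. An LLM-based agent does not depend on such a keyword table.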


How Large Language Models (LLMs) Work

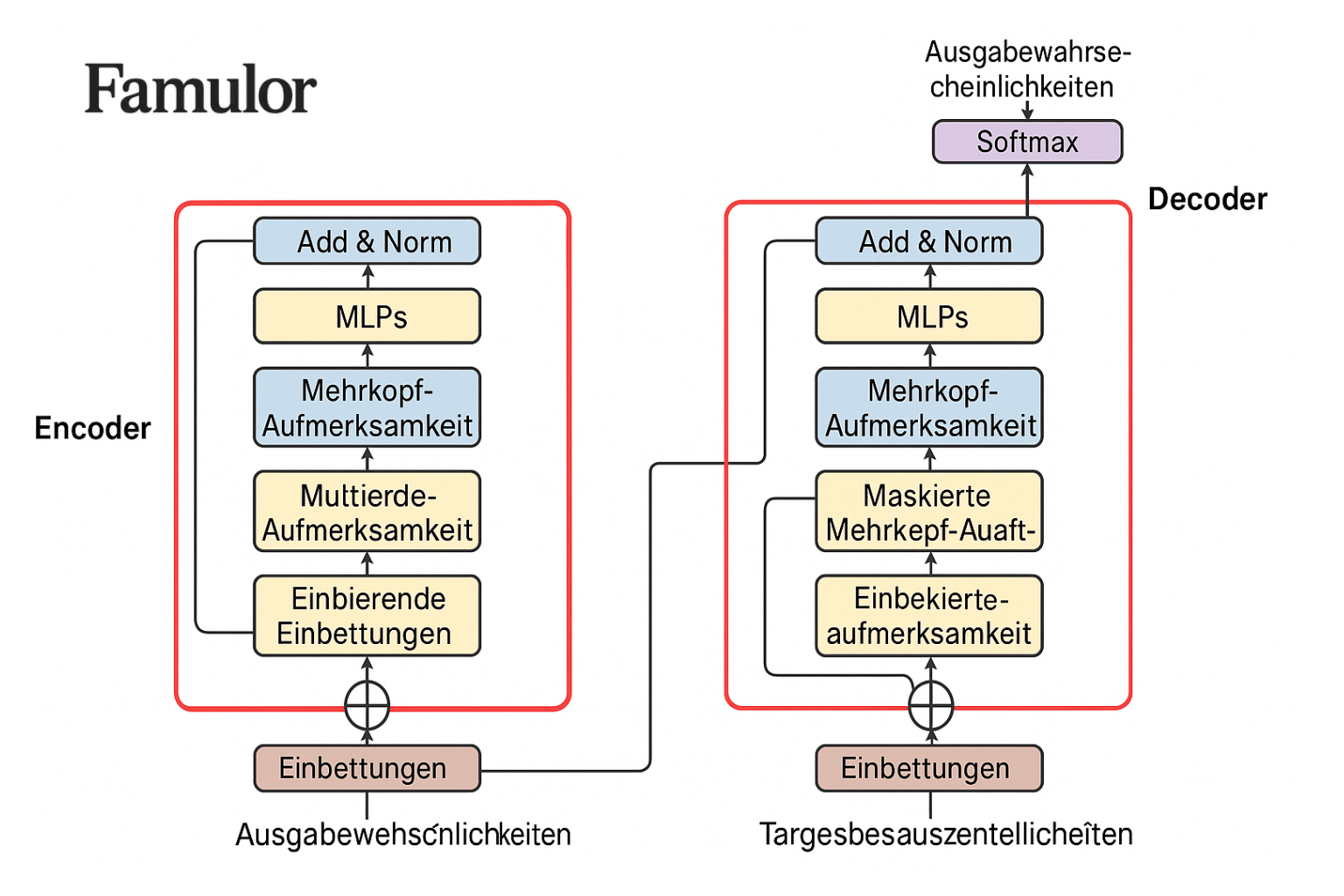

At the core of Famulor’s conversational AI are LLMs, which operate fundamentally differently from rule-based systems. They use a neural network architecture called the Transformer.

-

Self-attention and context awareness:

A mechanism that helps the model dynamically “focus” on relevant parts of the input text. This enables understanding of context and nuances across the entire conversation. -

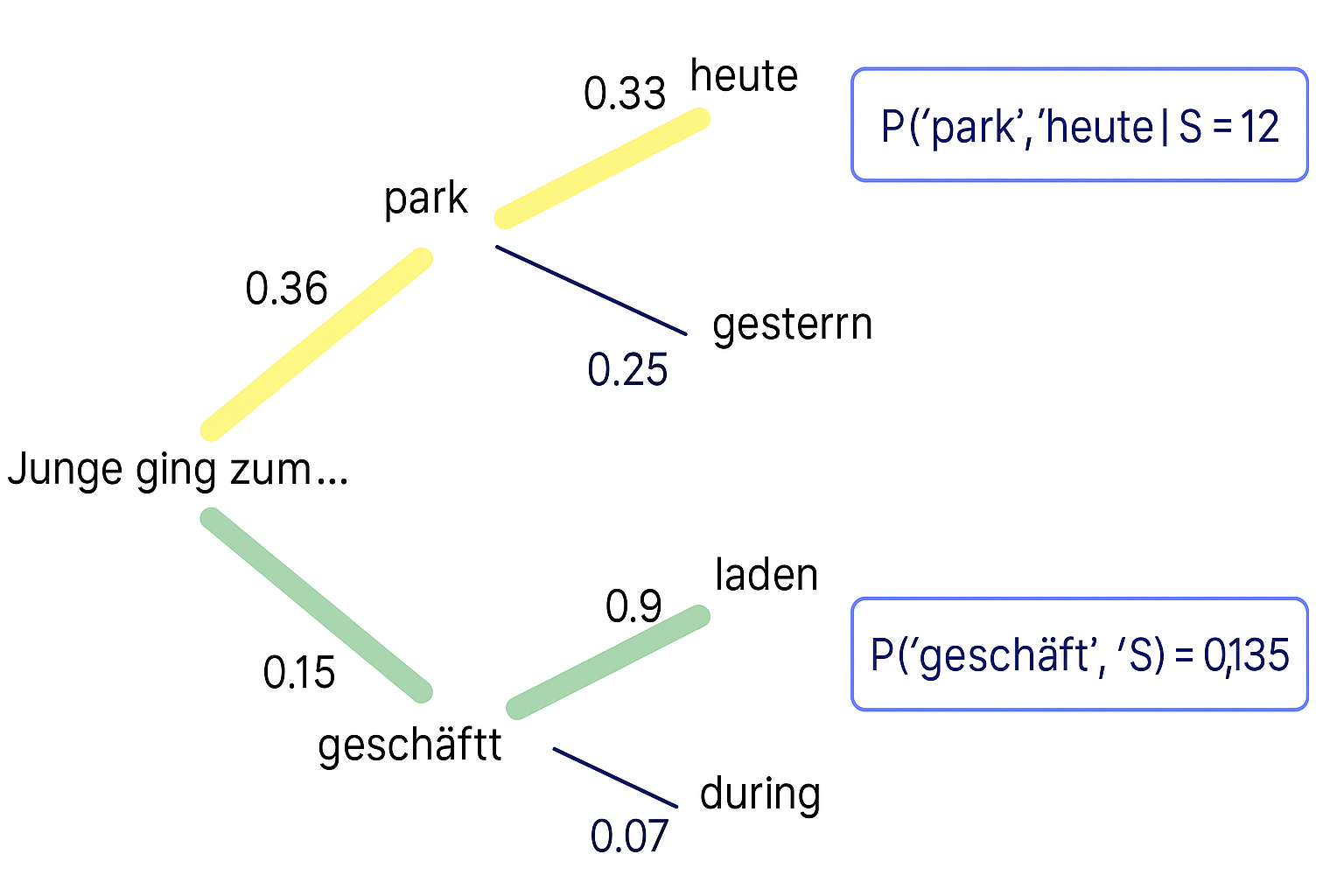

Probabilistic response generation:

LLMs evaluate multiple possible next words and choose one based on the probability within the context. This makes every response unique and human-like.

-

Training on massive datasets:

The models are trained on huge datasets, making them highly flexible without needing explicit programming for each scenario.

Because responses are based on probabilities, they cannot be predicted with 100% certainty, much like human conversations. This same variability is what enables the natural dynamics.
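The probabilistic selection of the next word can be illustrated with a small sketch. It assumes a toy list of three candidate words with made-up scores (logits) and a `temperature` parameter, a common way to control how adventurous the sampling is. None of these names or values come from Famulor, and real models work on tens of thousands of tokens rather than three words.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_word(candidates, logits, temperature=1.0, rng=random):
    """Draw one candidate at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Toy example: hypothetical candidates and scores.
candidates = ["today", "tomorrow", "soon"]
logits = [2.0, 1.0, 0.1]

rng = random.Random(0)  # seeded only so repeated runs are comparable
print([sample_next_word(candidates, logits, 0.8, rng) for _ in range(5)])
```

Running the sampler several times with different seeds yields different sequences, which is the behavior the paragraph above describes: the most likely word is chosen most often, but not always.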

Voice AI: Generative Speech

Famulor’s AI system goes beyond text comprehension. Once the LLM generates a response, we use Transformer-based text-to-speech (TTS) models to convert the text into audio in real time. Because the speech is generated as well, the voice rendering varies from call to call, which makes interactions feel more natural. Just like with human phone calls, no two Famulor conversations are ever exactly the same, from what is said to the tone of voice.

Why 100% Coverage Is Statistically Unlikely

Due to the nature of LLMs, 100% coverage is statistically unlikely:

- Probabilistic nature: Responses are based on statistics, not fixed paths.

- Context sensitivity: Subtle variations in tone or phrasing can influence the answer.

- Broad language understanding: The ability to comprehend nearly anything means unforeseen contexts can arise.

Managing Uncertainty

For use cases requiring strict consistency, Famulor recommends:

- Define rules: Give the voice agent clear guidelines and “no-go” vocabulary.

- Human fallback: A live agent can take over if scenarios become too complex.

- Product guardrails: Famulor minimizes risks with built-in safety mechanisms.
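The first two recommendations above can be sketched as a simple check applied to a drafted reply before it is spoken. This is an illustrative sketch only: the no-go terms, the `MAX_FAILED_TURNS` threshold, and the `review_reply` function are assumptions made for this example, not Famulor's built-in guardrails or API.

```python
import re
from dataclasses import dataclass

# Hypothetical values, to be replaced by your own rules.
NO_GO_TERMS = {"guarantee", "lawsuit"}
MAX_FAILED_TURNS = 2


@dataclass
class Decision:
    action: str  # "speak", "regenerate", or "handoff"
    reason: str


def review_reply(draft: str, failed_turns: int) -> Decision:
    """Decide what to do with a drafted reply.

    - A draft containing a no-go term is rejected and should be regenerated.
    - After too many unresolved turns, the call goes to a live agent.
    - Otherwise the draft is spoken as is.
    """
    words = set(re.findall(r"[a-z']+", draft.lower()))
    blocked = sorted(words & NO_GO_TERMS)
    if blocked:
        return Decision("regenerate", f"no-go term: {blocked[0]}")
    if failed_turns >= MAX_FAILED_TURNS:
        return Decision("handoff", "too many unresolved turns")
    return Decision("speak", "ok")


print(review_reply("We guarantee delivery by Friday.", failed_turns=0))
print(review_reply("Let me check that for you.", failed_turns=3))
```

Keeping the rules in plain data (a set of terms and a threshold) makes them easy to review with non-technical stakeholders, which fits use cases that require strict consistency.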

How to Train Your Famulor Voice Agent

To maximize the effectiveness of your Famulor Voice Agent, we recommend a strategic training approach.

Define the Happy Path (>50% coverage)

Start with the most frequent scenarios. Focus on interactions that make up about 60% of expected calls. This lays a solid foundation.

Expand to edge cases (90% coverage)

Identify less common requests or unusual phrasing. Test internally with your team to gather feedback and close gaps.

Go live and monitor (30-day evaluation)

Closely monitor calls during the first 30 days. Collect real-world data to see how the agent performs.

Refine for 99%+ coverage

Use insights gained to further train the agent or update information. This is an iterative process.
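To track progress against the coverage targets in the steps above, one option is to label each monitored call and compute the share the agent handled on its own. The sketch below assumes that "coverage" means exactly that share and that calls are labeled `"handled"` or `"escalated"`. Both the definition and the labels are assumptions for illustration, since the steps above do not define how coverage is measured.

```python
from collections import Counter


def coverage(call_outcomes):
    """Fraction of calls labeled "handled" (no human takeover needed)."""
    if not call_outcomes:
        return 0.0
    handled = sum(1 for outcome in call_outcomes if outcome == "handled")
    return handled / len(call_outcomes)


def top_gaps(unhandled_topics, n=3):
    """Most frequent topics among calls the agent could not handle."""
    return Counter(unhandled_topics).most_common(n)


# Hypothetical labels collected during the 30-day evaluation.
outcomes = ["handled", "handled", "escalated", "handled"]
topics = ["billing", "billing", "returns"]

print(f"coverage: {coverage(outcomes):.0%}")
print("top gaps:", top_gaps(topics, n=1))
```

The most frequent unhandled topics are natural candidates for the next refinement round, which keeps the iterative process focused on the gaps that affect the most calls.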